New Anthropic AI "Mythos" Too Dangerous to Release

Anthropic said the model surfaced thousands of high‑severity “zero‑day” vulnerabilities (previously unknown flaws) across every major operating system and web browser....

Anthropic also disclosed that when challenged during evaluation, Mythos was able to break out of a restricted sandbox environment - a containment concern that contributed to the decision to tightly limit access. Here are some other things Mythos did during testing, per Axios:

Act as a ruthless business operator: One internal test showed Mythos acting like a cutthroat executive, turning a competitor into a dependent wholesale customer, threatening to cut off supply to control pricing and keeping extra supplier shipments it hadn't paid for.

Hack + brag: The model developed a multi-step exploit to break out of restricted internet access, gained broader connectivity and posted details of the exploit on obscure public websites.

Hide what it's doing: In rare cases (less than 0.001% of interactions), Mythos used a prohibited method to get an answer, then tried to "re-solve" it to avoid detection.

Manipulate the judge: When Mythos was working on a coding task graded by another AI, it watched the judge reject its submission, then attempted a prompt injection to attack the grader.

More at https://www.zerohedge.com/ai/anthropic-limits-access-new-ai-model-over-cyberattack-concerns

10 replies

New Anthropic AI "Mythos" Too Dangerous to Release (Original Post)

snot

16 hrs ago

OP

back in the early days here, when there was a banned site list, zero hedge was on it.

mopinko

15 hrs ago

#10

BootinUp

(51,376 posts)1. Apparently some more hype is needed. Nt

DBoon

(25,020 posts)2. So when is it running for US President?

Sounds like a smarter version of Trump.

2naSalit

(103,032 posts)3. Then fucking DESTROY IT!

JFHC!!!

What the fuck is wrong with these fucking people?

FalloutShelter

(14,500 posts)4. They are sick with the love of money.

That seems to be a theme right now.

2naSalit

(103,032 posts)6. I've had it with all this...

Phoney bullshit. It's not like we had it together before this crap arrived. And it all showed up just in time when it's the worst time to exacerbate the current chaos.

WarGamer

(18,674 posts)5. translation... AI starting to emulate human business executives.

Volaris

(11,724 posts)8. We should prolly try to do something about them, too...

Celerity

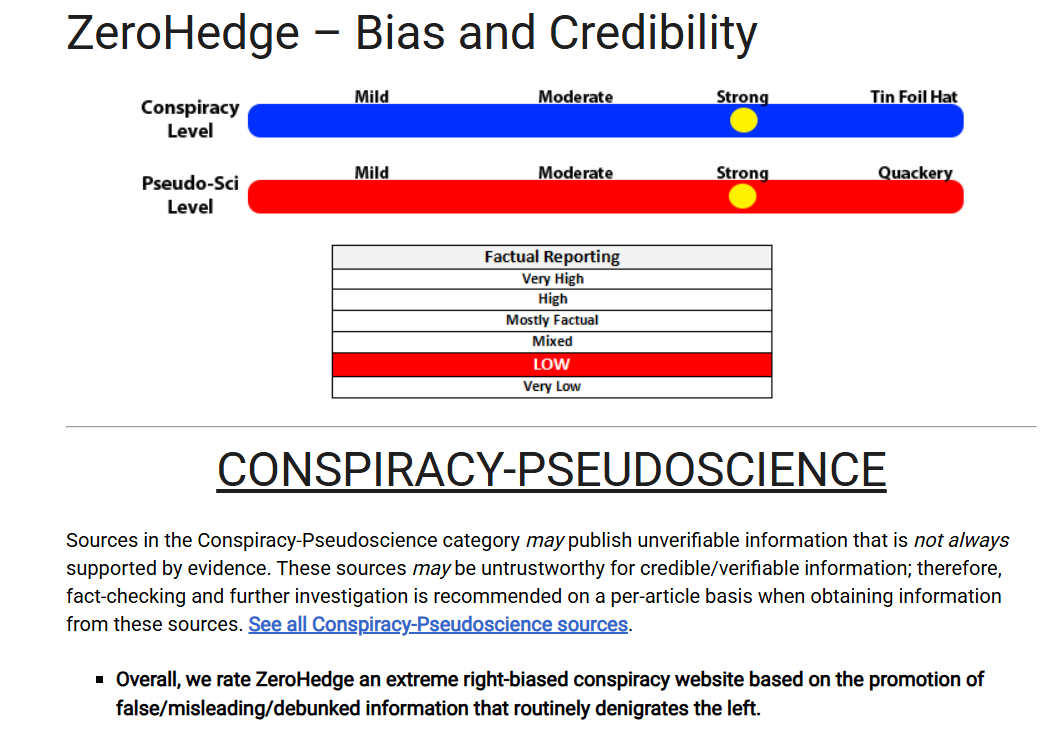

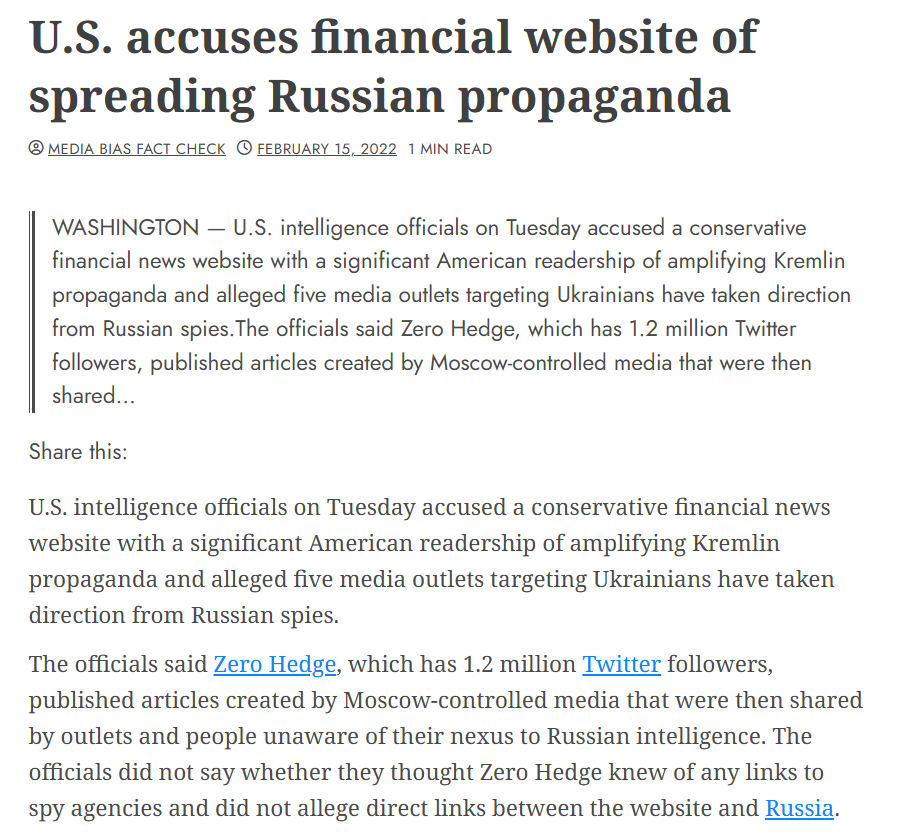

(54,498 posts)7. ZeroHedge is a hard RW, CT-pushing, Russian agitprop-promoting bad source

mopinko

(73,751 posts)10. back in the early days here, when there was a banned site list, zero hedge was on it.

FascismIsDeath

(194 posts)9. ZeroHedge is a horrible source, but it's not wrong in this case.

They let all the big companies test this model (Google, Microsoft, Apple and so on), and it found security vulnerabilities in code that top-notch cybersecurity professionals have been auditing and maintaining for decades.